Iurii Dziuban

IuriiD

Cherkasy, Ukraine

https://github.com/IuriiD/

iurii.dziuban@gmail.com


This is the place where I sum up my learning progress, side projects and useful links.

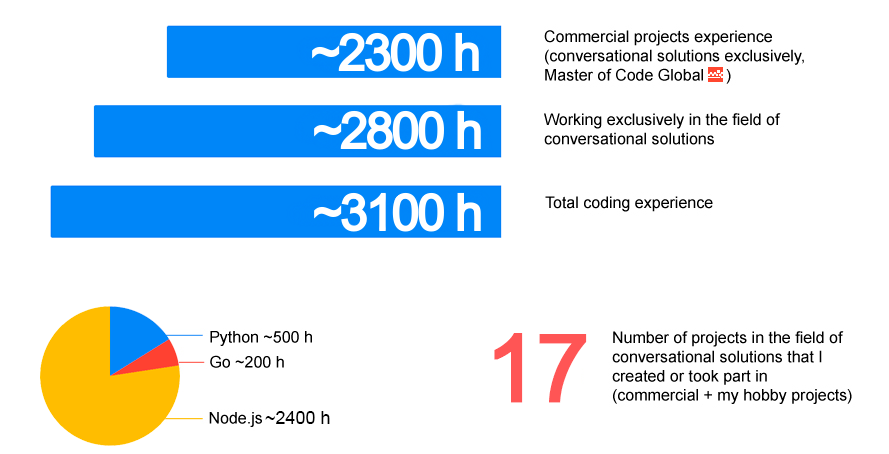

My current coding experience at a glance (as of January 2020):

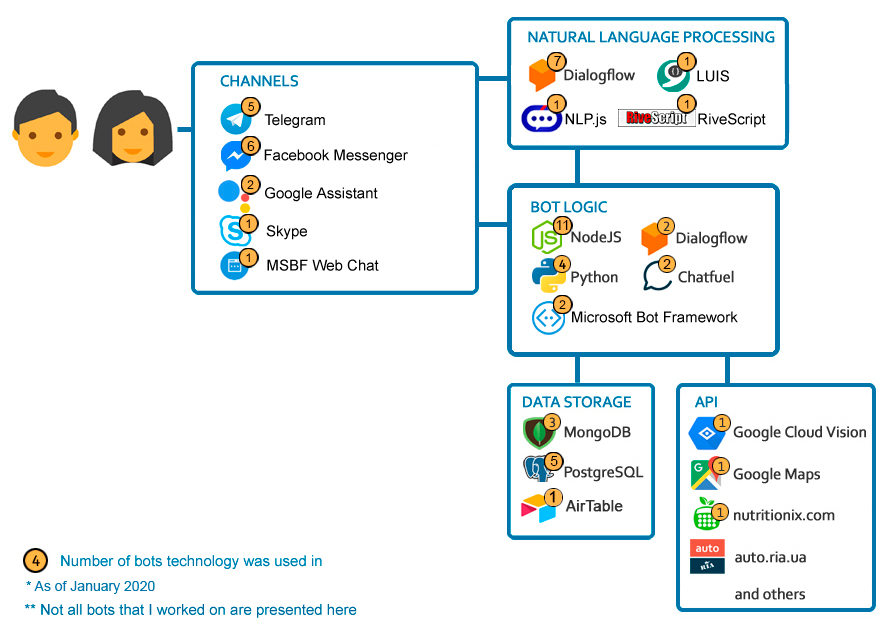

My current tech stack in regard to chatbots (based both on commercial and side-project experience)

I'm gradually covering the full stack of chatbot development, including:

- Idea generation/Problem solving, defining requirements

- Design (choosing architecture, designing conversation flow, choosing/preparing the content, graphical/functional prototyping)

- Development (implementing bot logic, data storage, NLU, 3rd party integrations, custom features, bot-to-human handover etc)

- Deployment

- Follow-up (metrics/statistics, alerts etc)

Projects

Below are the side/hobby projects that I did before being hired and in parallel with my work.

Playing with the Dialogflow API, I created a simple chatbot which can be "taught" (expanded by adding new intents) during the dialogue with this bot. That is, if the bot doesn't understand something, it asks if it should store this phrase with a corresponding response as a new intent. Thus this bot can be expanded in a rather "natural" way, through the dialogue (as people do ;). Please see the detailed video tutorial about how to recreate such a bot.

This is my first modest experience with hardware. Using Dialogflow and node.js I created a simple chatbot which encodes the user's input into Morse code. This bot returns dots and dashes to the Web Demo form and in parallel makes an ESP32 board blink the encoded message with an LED. The code for the board was written in JavaScript using the Espruino framework. Please see the detailed video tutorial about how to recreate such a bot yourself.
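The encoding step of such a bot can be sketched as follows. This is only a minimal illustration in Python, not the bot's actual code (which was written in node.js, with Espruino JavaScript on the board). The table covers letters and digits only, and the function name `to_morse` and the use of `/` as a word separator are assumptions made for this example.

```python
# Hypothetical sketch: text -> Morse code (letters and digits only).
MORSE = {
    "A": ".-", "B": "-...", "C": "-.-.", "D": "-..", "E": ".",
    "F": "..-.", "G": "--.", "H": "....", "I": "..", "J": ".---",
    "K": "-.-", "L": ".-..", "M": "--", "N": "-.", "O": "---",
    "P": ".--.", "Q": "--.-", "R": ".-.", "S": "...", "T": "-",
    "U": "..-", "V": "...-", "W": ".--", "X": "-..-", "Y": "-.--",
    "Z": "--..",
    "0": "-----", "1": ".----", "2": "..---", "3": "...--", "4": "....-",
    "5": ".....", "6": "-....", "7": "--...", "8": "---..", "9": "----.",
}


def to_morse(text: str) -> str:
    """Encode text: letters separated by spaces, words by ' / '.

    Characters missing from the table (punctuation etc.) are skipped.
    """
    words = []
    for word in text.upper().split():
        codes = [MORSE[ch] for ch in word if ch in MORSE]
        if codes:
            words.append(" ".join(codes))
    return " / ".join(words)


print(to_morse("SOS"))  # prints "... --- ..."
```

On the board, the same string could then be walked symbol by symbol, switching the LED on for a short or long interval for each dot or dash.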

My company, Master of Code, has many cool traditions. One of them is Secret Santa - this is when each team member is assigned a random person for whom he/she prepares a New Year present. And then there's a party with a festive distribution of these gifts. This year (that is, for NY 2020) I decided to prepare something more than just a present and created a small IT quest which included a chatbot.

Please see this step-by-step tutorial showing how it was done and feel free to use this idea/bot with your Company and/or friends. P.s. The idea of a quest was inspired by this topic on Reddit about Secret Santa and The Architect (user squeakysqueakysqueak).

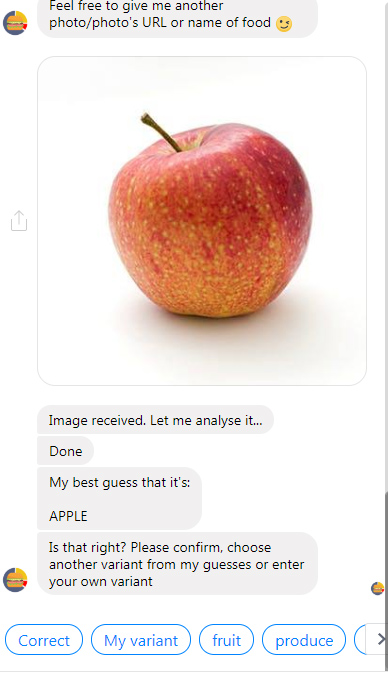

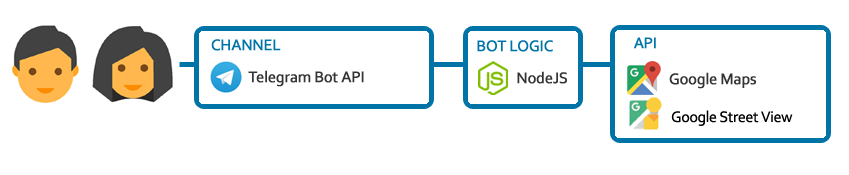

I decided to create a city quest in the format of a chatbot. But this time I decided to go further and try to create a detailed video tutorial on how I'm currently creating my chatbot hobby projects. In this series of 11 videos (~2h in total) you can find a step-by-step tutorial which is a summary of about 150 hours spent by me on this project (during 2019).

I describe how I got the idea of a city quest chatbot and my reasoning for using the chatbot format for such a project, selecting tools (Chatfuel, node.js, Glitch, Airtable, Google Vision API), preparing the contents/scenario for the quest, composing the bot's flow in Chatfuel, writing webhooks in node.js on Glitch (including simple image "interpretation" using Google Vision API), using Airtable to store users' data etc. The penultimate video contains a screencast of passing the quest.

I share the webhooks code (as a Glitch remix) and can send collaborator invites on Chatfuel for you to access the bot's flow (the Facebook Messenger bot is not public so far).

Here are some of the videos as an example (please see the full playlist):

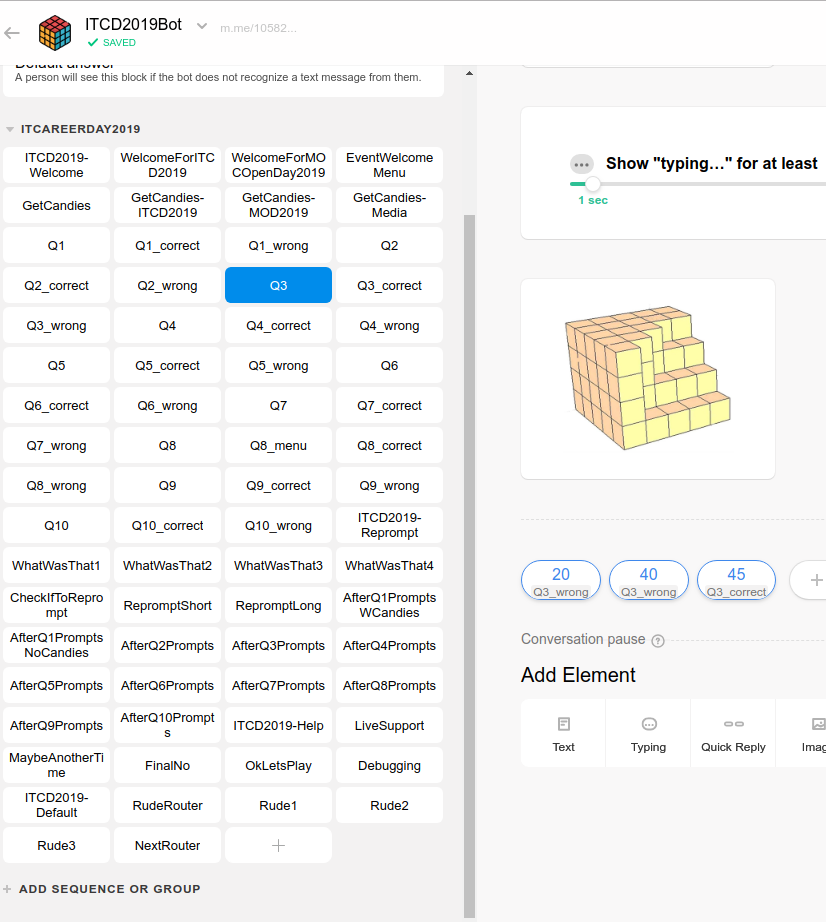

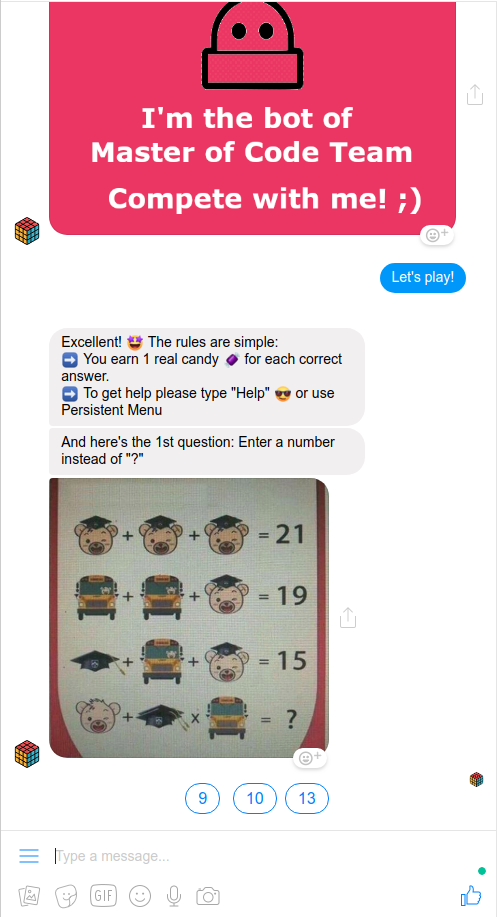

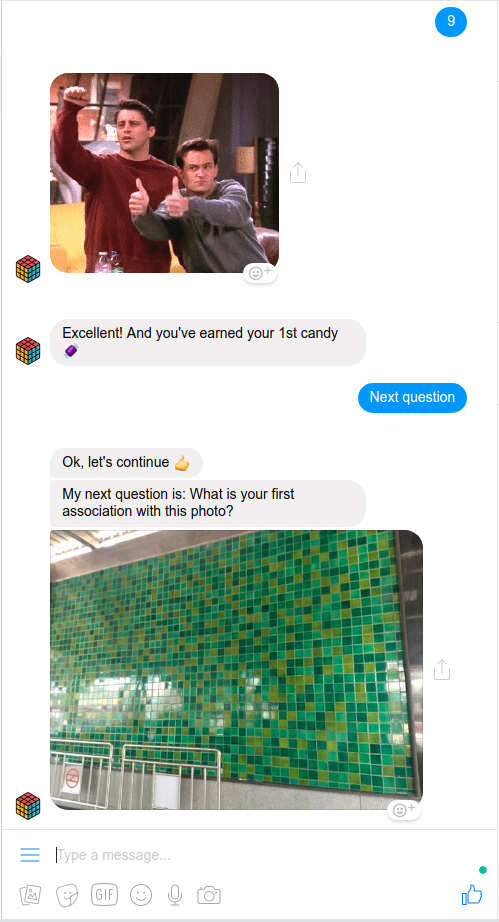

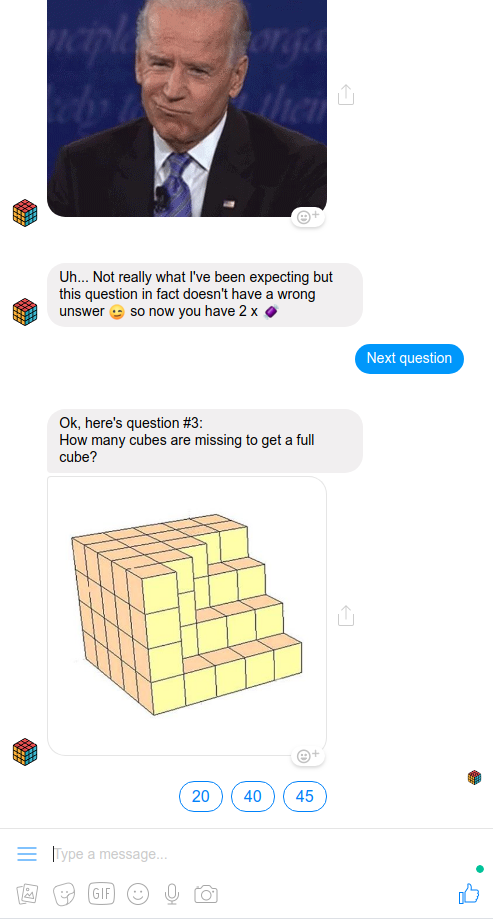

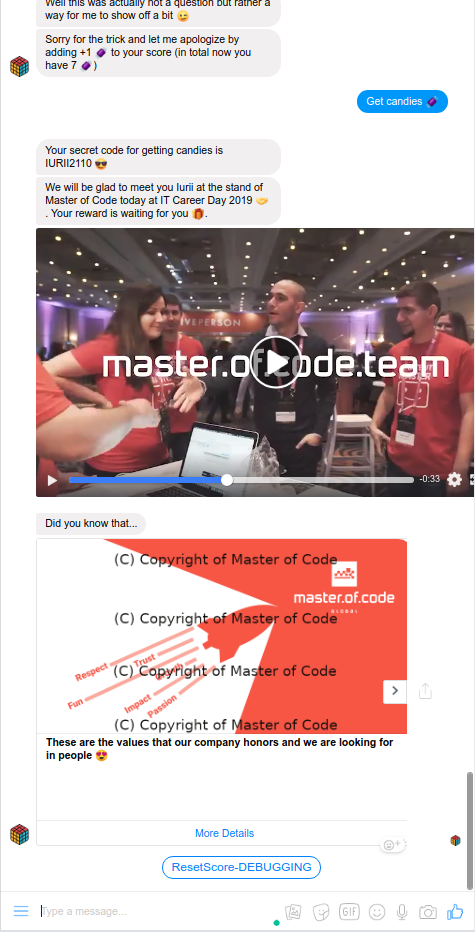

On May 22, 2019, our company co-organized an event called IT Career Day 2019, an annual job fair where member companies of the IT Cluster of our town (Cherkasy, Ukraine) present themselves and promote the IT sphere in general.

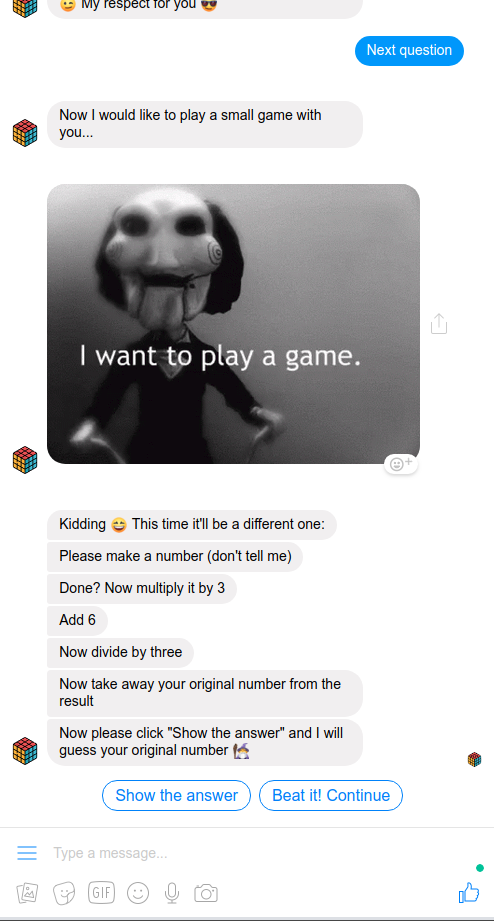

I created a chatbot-quiz for this event where the users could answer questions and win real candies and other prizes (which were given at the Company's booth). Some of the questions were to check user's attentiveness or maths skills but most were about knowing IT life and IT humour ;)

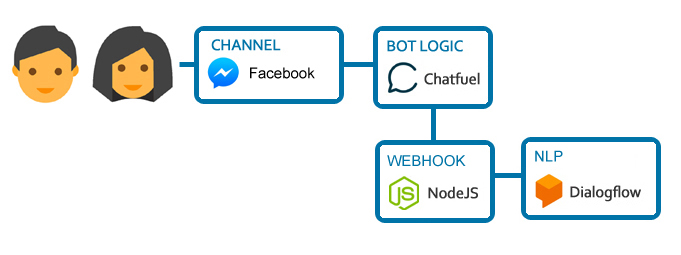

The bot is built on Chatfuel with a custom backend written in node.js and deployed as a Lambda function to AWS. The main purpose of the backend is connecting Dialogflow to Chatfuel (plus generating a verification code and providing some other minor features). The bot uses Chatfuel's built-in export to Google Sheets, send-to-email and human handover functionality.

When the user finishes the quiz and clicks "Get candies" a notification email is sent to admins containing user's info (name, gender, locale, a link to FB profile info etc), the verification code, number of candies that the user has won and also his/her answers to the quiz. Similar results (but without responses to the quiz) are also saved to a Google Sheets document.

Navigation in the bot is possible using quick reply buttons and text commands (thanks to Dialogflow). The bot reprompts the last block of contents in case the user entered something irrelevant or accidentally entered some text so that quick reply buttons disappeared.

Bot launch results: During the event about 150 people played with the bot with ~60% coming to the Company's booth for the prize. The users seem to have liked the bot and also were often surprised when we called them by name even before they introduced themselves ;) (having their name and often a real profile photo received from the bot).

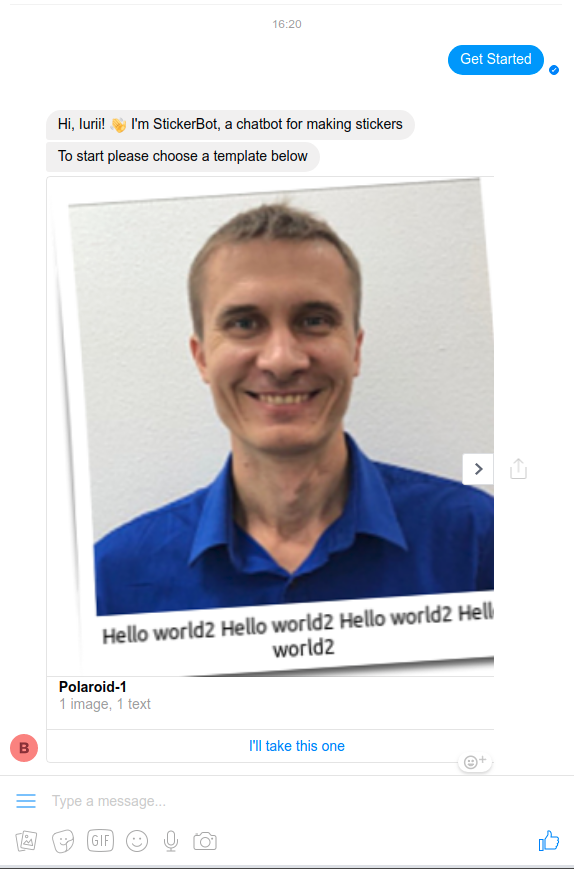

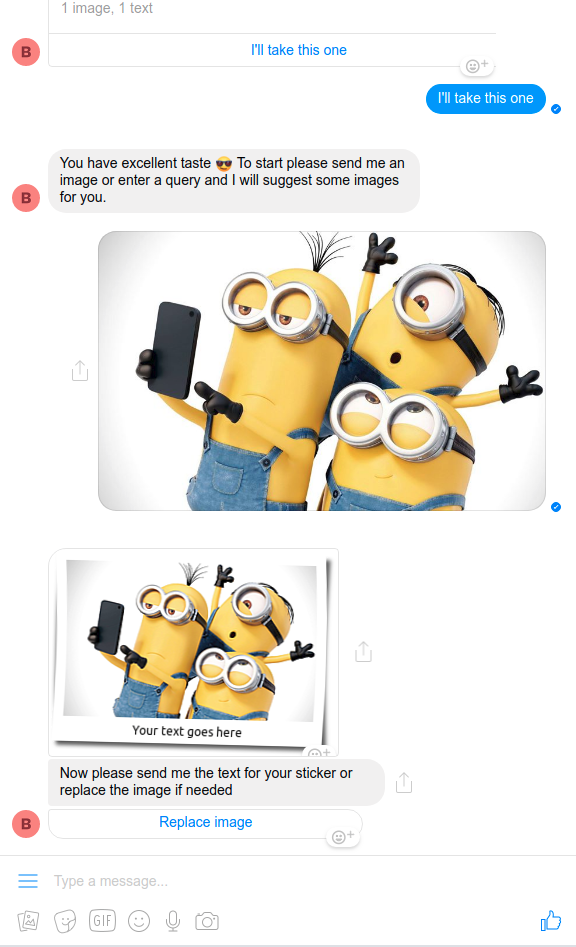

Found some time to get acquainted with ImageMagick. Messenger has powerful built-in drawing capabilities but I thought that it might be good to make a chatbot able to process images according to given templates (e.g. add a company logo or create some stylized stickers for sharing in the conversation). I failed to finish this bot due to time limitations/other more important tasks but got some useful experience and additional practice in chatbot building. Maybe I'll use/reuse it in some other projects later.

The flow was supposed to be the following: the bot greets the user and offers a list of templates to choose from. Only 1 template was finished - a so-called "Polaroid" (converts a photo into a polaroid-style image with a custom text title). Many other templates could be added (e.g. I've thought of "Visa" - upload photo[-s] and indicate a country to get a photo collage with some visa-style stamps/stickers added, "Logo" - adding a company logo or other branding to uploaded photos etc). The user chooses a template and then is asked to provide the needed data (photos, titles etc). The source and the final processed images are stored on AWS S3 with links saved for this user in the DB (so that one could create one's own sticker "packages" in Messenger).

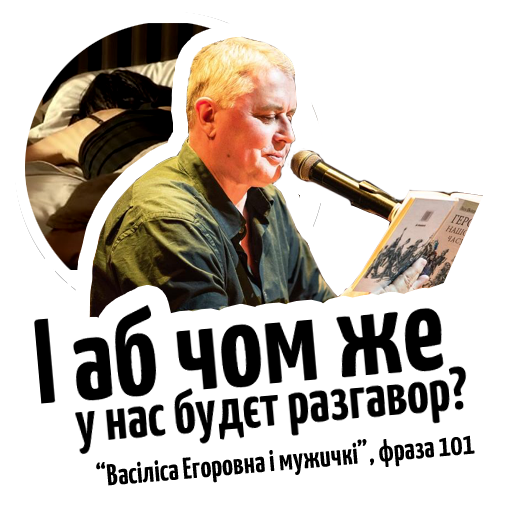

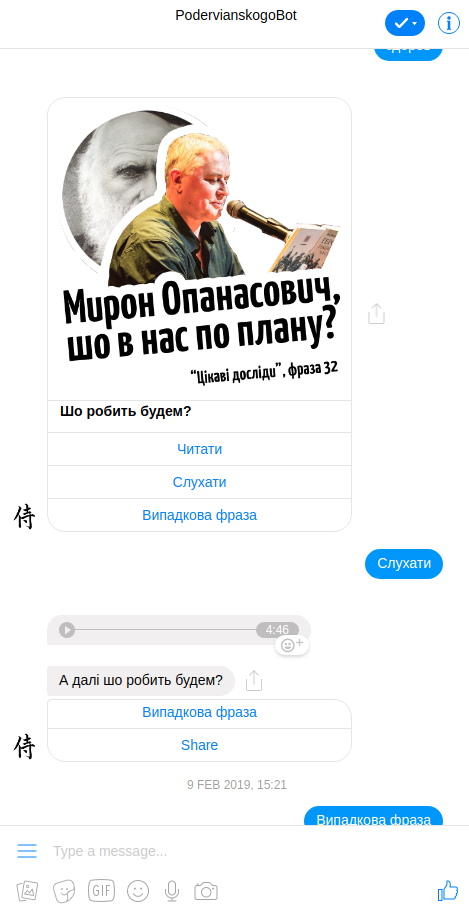

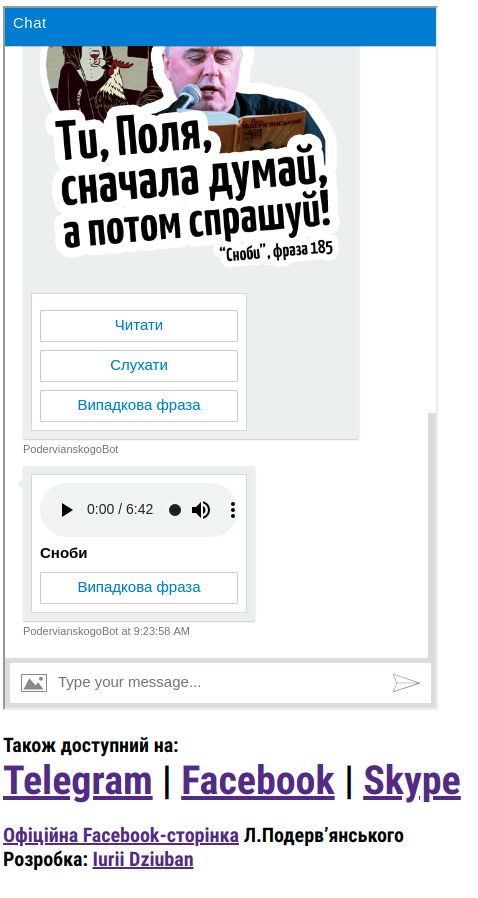

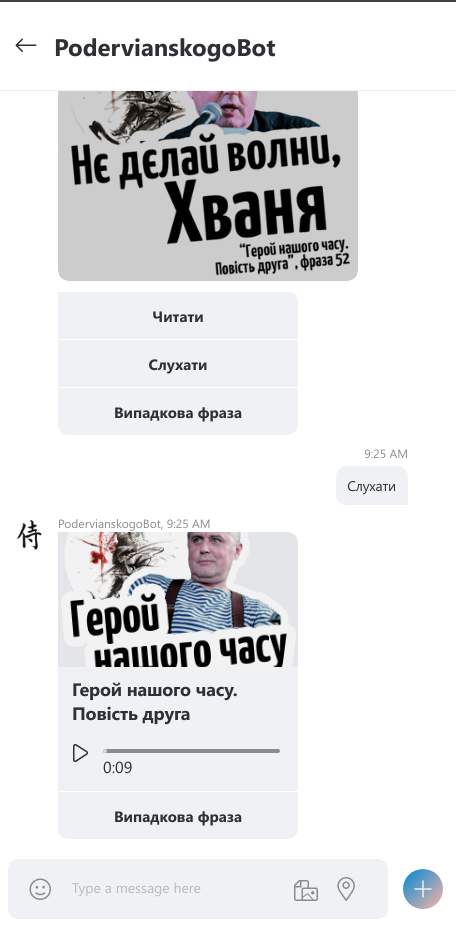

Since my last update of this site I launched my first multi-platform chatbot - Podervianskogobot.com. This is a bot which replies with popular quotes (drawn on stickers) from plays by Les Podervianskyi (the bot is in Ukrainian) and lets you read and listen to the respective plays performed by the author. Les Podervianskyi is a Ukrainian painter, poet, playwright and performer. He is most famous for his absurd, highly satirical, and at times obscene short plays, many quotes from which became popular memes (more on Wikipedia).

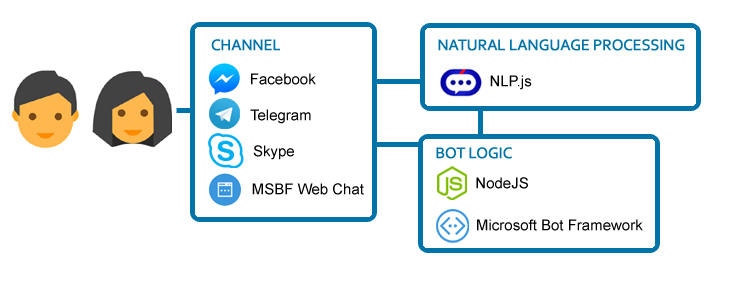

The bot was made using Node.js, Microsoft Bot Framework and nlp.js and is available on Facebook, Telegram, Skype and Web. Actually, this is the 2nd "generation" of the bot; the 1st (which wasn't launched and was intended only for Telegram) was made using Node.js, the telegraf wrapper of the Telegram API and RiveScript.

I started to work on it last summer (>6 months ago), before I started to cooperate with Master of Code. I thought that it would be fun to make such a bot, and also had a chance to try several new things, mainly RiveScript and nlp.js (inspired by this article). This was also my 1st 'live' bot on MS Bot Framework and the 1st bot for Skype and Web.

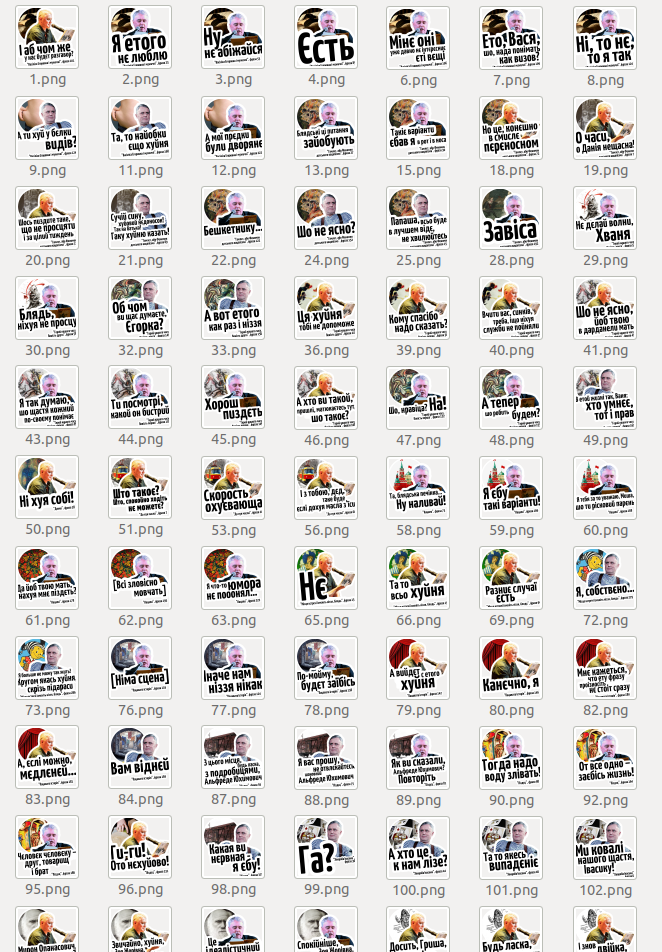

To make this bot I:

- Read through >25 plays by L.P. from this source, chose the most popular quotes (got ~140 of them);

- Took the most popular requests from Dialogflow's Smalltalk and assigned quotes from L.P.'s plays as responses to those requests;

- Contacted Les Podervianskyi's representative to discuss copyright matters and got approval;

- Drew stickers for all those quotes + separate stickers for the plays (~140 in total, this took up to 60% of time working on this project ;);

- Copied, parsed and formatted the texts of the plays, downloaded and prepared the audios.

- Created the bot itself on Node.js using Microsoft Bot Framework for 4 platforms (Telegram, Facebook, Skype, Web). Also wanted to make a version for Viber but their current policy doesn't allow that :( The bot is actually quite simple - after the greeting, each user input is "fed" to the NLU block which tries to respond with a relevant quote. If no intents are triggered then a simple full-text search is made and the user is presented with a list of plays in which his/her input was found. If no such phrases are found, the user gets a default fallback response.

- In this bot I used nlp.js, an open source library for NLU, inspired by the above-mentioned article. My conclusion about nlp.js: a nice tool that could be used if third-party solutions are not allowed for some reason, but for production I would still use Dialogflow or LUIS.

- Deployed the bot to an AWS EC2 instance. This bot is not using a DB or ElasticSearch (though these could be used and could improve the bot) and thus can be hosted on a single t2.micro instance which is free under the free-tier plan.
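The response logic described in the list above (NLU first, then a full-text search over the plays, then a default fallback) can be sketched like this. It is an illustrative Python outline, not the bot's Node.js code: `match_intent` is a stand-in for the NLU step (a trivial keyword lookup here rather than nlp.js), and the sample data, function names and response texts are all hypothetical.

```python
from typing import List, Optional

# Hypothetical sample data: intent keyword -> quote, play title -> play text.
INTENT_QUOTES = {
    "hello": "Quote used as a greeting",
    "bye": "Quote used as a farewell",
}
PLAYS = {
    "Play A": "some text about the sea and the stars",
    "Play B": "another text about the sea",
}
FALLBACK = "Default fallback response"


def match_intent(user_input: str) -> Optional[str]:
    """Stand-in for the NLU block: return a quote if an intent is triggered."""
    words = user_input.lower().split()
    for keyword, quote in INTENT_QUOTES.items():
        if keyword in words:
            return quote
    return None


def search_plays(user_input: str) -> List[str]:
    """Naive full-text search: titles of plays whose text contains the input."""
    needle = user_input.lower().strip()
    if not needle:
        return []
    return [title for title, text in PLAYS.items() if needle in text.lower()]


def respond(user_input: str) -> str:
    """Try NLU, then full-text search, then the default fallback."""
    quote = match_intent(user_input)
    if quote is not None:
        return quote
    found = search_plays(user_input)
    if found:
        return "Found in: " + ", ".join(found)
    return FALLBACK


print(respond("the sea"))  # prints "Found in: Play A, Play B"
```

Keeping the three steps as separate functions means the NLU stand-in could be swapped for a real engine without touching the search or fallback parts.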

So far the bot has had about 30 users from Facebook, ~10 from Telegram and a few from the Skype and Web versions.

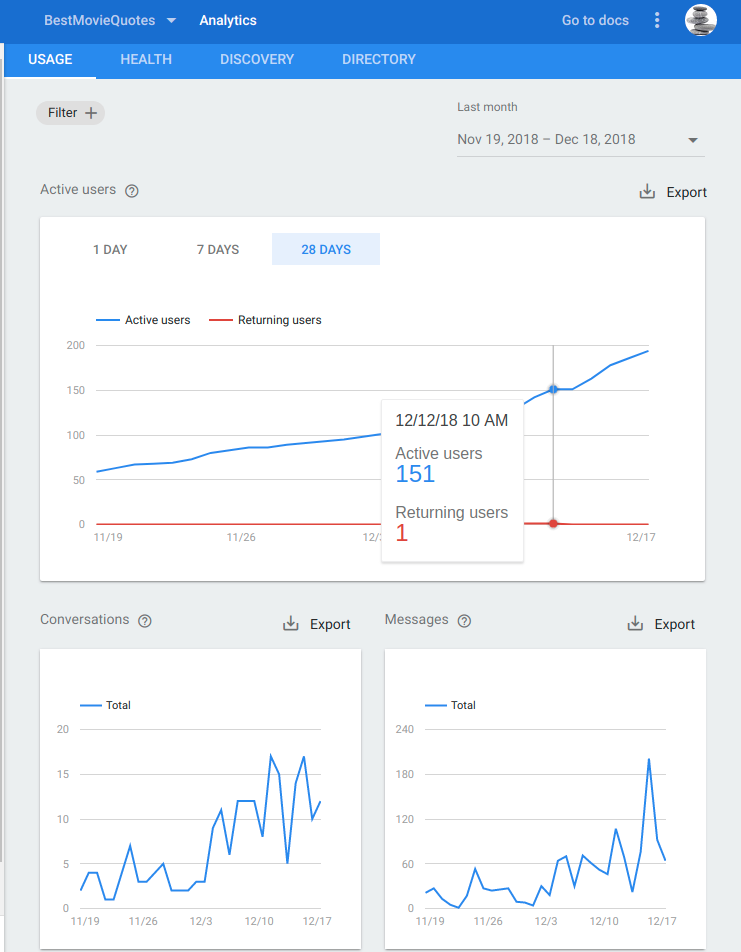

Starting from September 18, 2018, I switched from self-educating in hobby mode (2-4 h/day) to building chatbots full-time for Master of Code. So I will probably have less time for my side projects but will try to hold on ;)

In October I got acquainted with Actions on Google and built 2 simple voice bots for the Google Assistant platform using Dialogflow and Cloud Functions for Firebase. One of these bots, BestMovieQuotes, was approved by Google and is now publicly available (though not for all countries and/or locales - you may need to switch to English as the main language on your device). It's quite a simple bot: a stripped-down Dialogflow small talk agent that answers with audio quotes from famous movies (like "The Godfather", "Casablanca", "The Lord of the Rings", "Titanic" etc.). It gives more or less relevant responses to phrases like 'hello', 'how are you', 'what's up', 'what is life/love', 'bye' etc and you can also ask it for a random quote. You can try it on your smartphone in the Google Assistant app (Android, iOS) or on devices like Google Home etc. To invoke the bot please say something like 'Ok Google, talk to Best Movie Quotes' or 'Ask Best Movie Quotes for a random quote'.

P.s. A few words about how I got this bot approved and included into Google Actions directory: it wasn't so straightforward, I succeeded only after 3 tries ;) The problem was that I wanted my bot to conduct a more or less 'natural' talk, listening to user's phrases and responding with relevant quotes. But the guys approving the app wrote that "During our testing, we found that your app would sometimes leave the mic open for the user without any prompt". I tried to prompt the user to continue dialogue using the quote "Talk to me goose" from "Top Gun" which I added after each response but this variant was also rejected. So finally I put an explicit 'robot-read' prompt after each quote - it's not really what I wanted and sounds a bit weird but probably is more correct.

As for the 2nd bot: we had a Halloween party here at MOC, and I built a simple voice bot especially for this event - CreepySounds. I didn't submit it to the Google Actions directory so this bot isn't available publicly. But if you like the idea you may use my code and easily make one for yourself (it should be accessible as a test version on devices where you're logged in). This bot responds to any voice input with a random scary sound (taken from the Google sounds library, mainly Horror sounds).

This post is not about another chatbot of mine but about an important event in my coding career: I got hired and now work at Master of Code (FB, www)!

Just a quick summary of my journey to this stage: I'm a biologist by education, and for the last 10 years I have been working as an English-Russian medical translator. Married, we have 3 small kids (<6 years old). I started to learn coding 11 months ago, in Oct 2017, when I was 34. I was studying for 2-4 hours a day after my main work and on weekends, ~80 hours/month. By the time I got a job I had been self-educating for ~840 hours net. I was making small projects (9 in total). Created 8 chatbots. Started with Python but then moved to Node JS. For more info, please see below. I had 2 interviews, both at the company for which I’m working now. Still getting used to the new format of work/life (as of Oct 11, 2018, I’ve been working for 3 weeks). A long and exciting way ahead… ;)

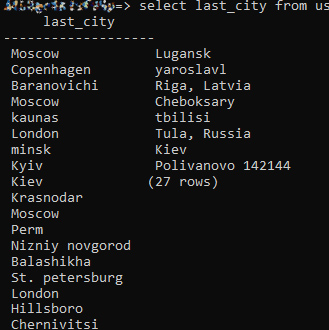

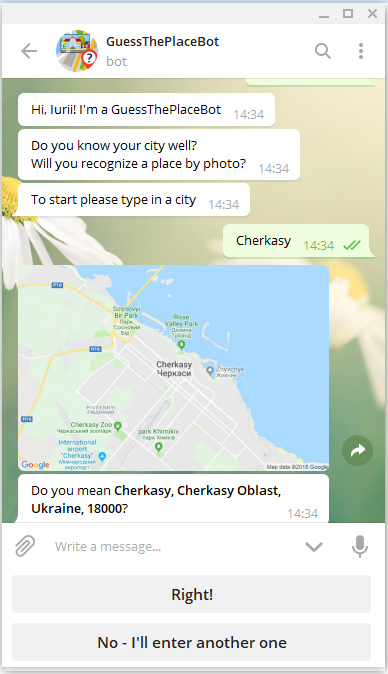

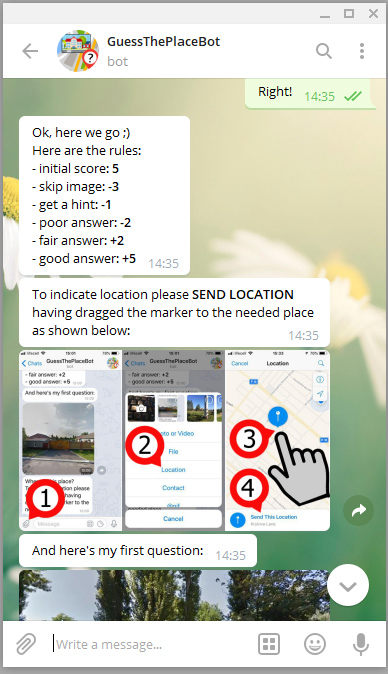

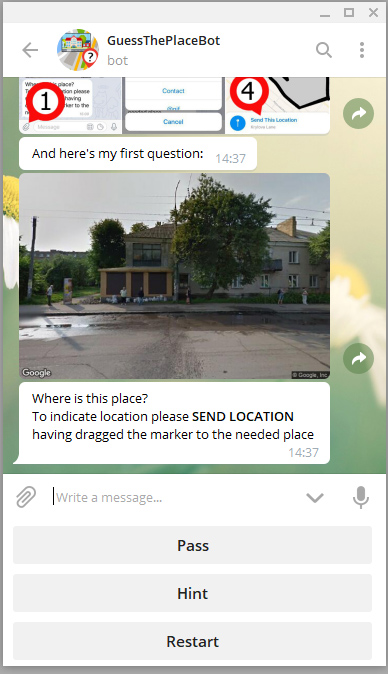

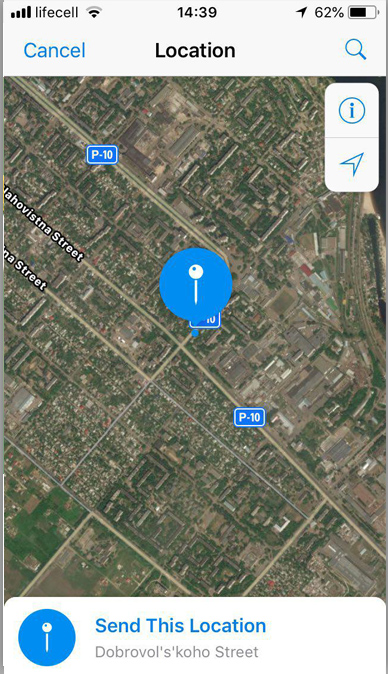

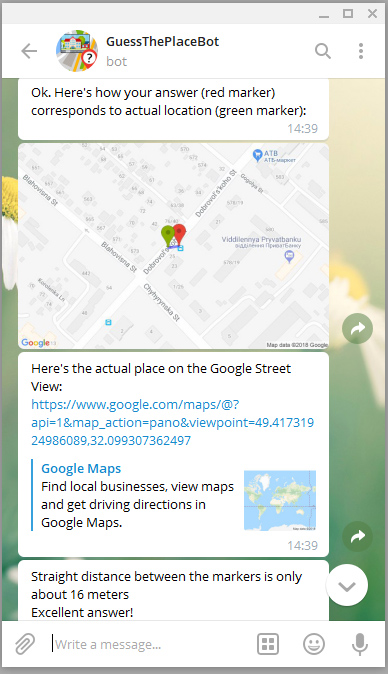

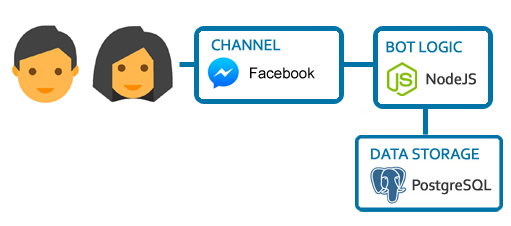

Do you know your city well? Can you recognize its places by street view photos? Play a game with GuessThePlaceBot and check that ;) This is a Telegram bot that asks to identify places in a chosen city by Google Street View images. Built using NodeJS for bot logic, Telegram Bot API (Telegraf wrapper), hosted on AWS Lambda, stores conversation state in PostgreSQL DB (AWS RDS).

Note: Doesn't work on Telegram Desktop or Telegram for Web (a platform limitation: they don't allow sending a location)

September 30, 2018: Presented the bot in the blog findthisplace.d3.ru, getting feedback.

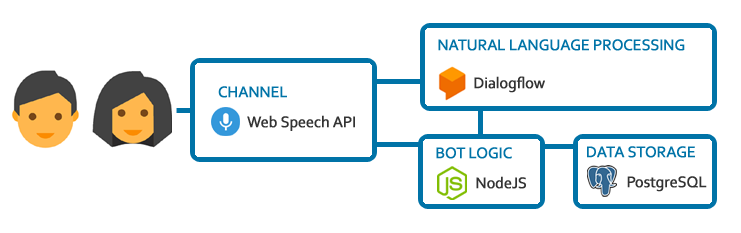

It's always more interesting to learn something in practice ;) So while learning PostgreSQL I decided to write a simple bot that tells the beginning of a phrase (e.g. of a proverb) and asks the user to finish it. It uses Dialogflow for conversation construction with webhooks on Node JS, and PostgreSQL for storing questions. But to make this bot a little bit more original I also attached a voice "frontend" written by Jaanus Kase. Give it a try ;) (works in Chrome for desktop or Android; the webhook is hosted on Heroku and goes into sleep mode after 30 min of inactivity, so the 1st response may take some time or may be empty).

P.s. This bot also understands SQL and the database it works with can be managed through the bot itself (see video; I won't provide the link to the text-only version of this bot though ;)).

FinishPhraseBot

(March-June 2018 [project stopped])

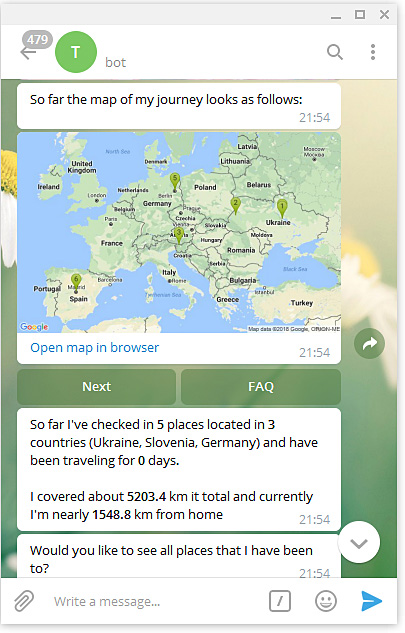

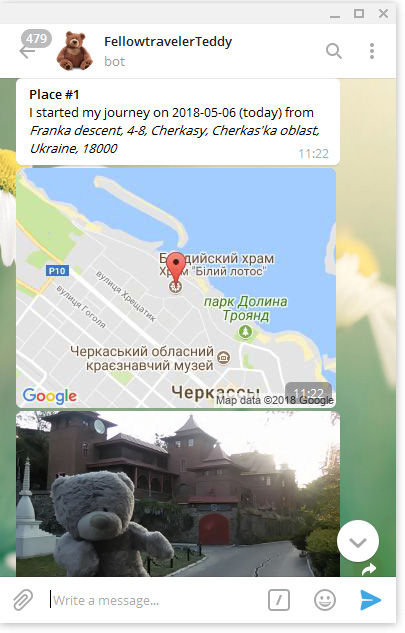

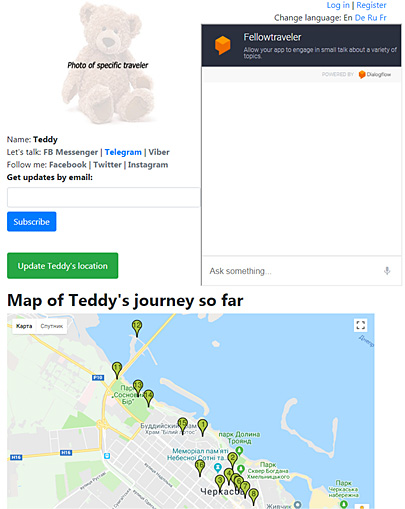

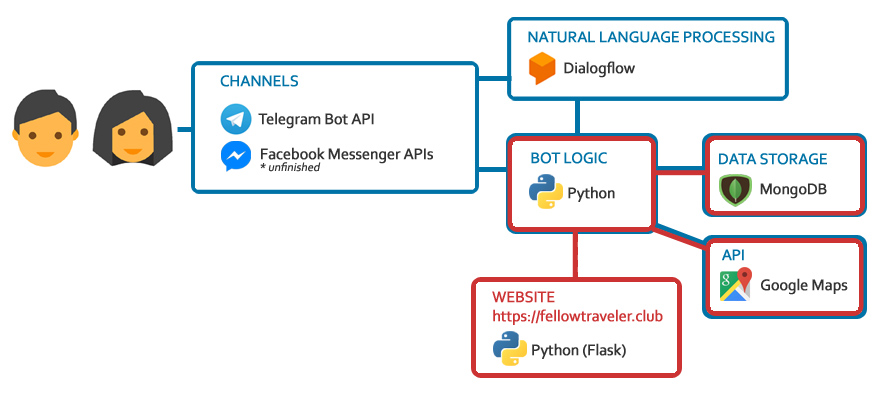

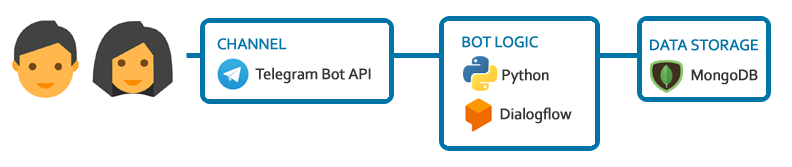

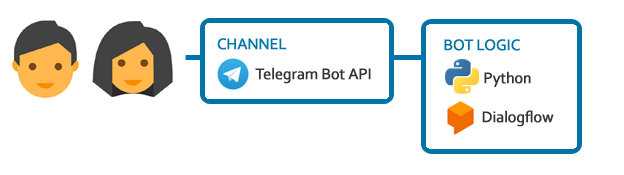

This is supposed to be an entertainment project/social experiment - a toy 'travelling' across the globe being passed between random people. Built in Python using Flask, MongoDB and APIs for Google Maps, Dialogflow and messengers (Telegram, Facebook). A chatbot (2 integrations - Telegram & Facebook) and a website working with the same database.

Update - 02 June 2018: Website and chatbot for Telegram are almost done. Hope to make a version for FB Messenger and launch the project soon.

Update - 11 July 2018: Due to other training tasks with higher priority I had to pause my work on this project (but still hope to finish it and launch). Website and Telegram bot are almost ready, Facebook bot is ready for ~80%.

Update - 18 December 2018: Eh.. Still, no time to finish this project. Telegram bot and website off. Who knows maybe one day I will rewrite it on node.js (I still like the idea).

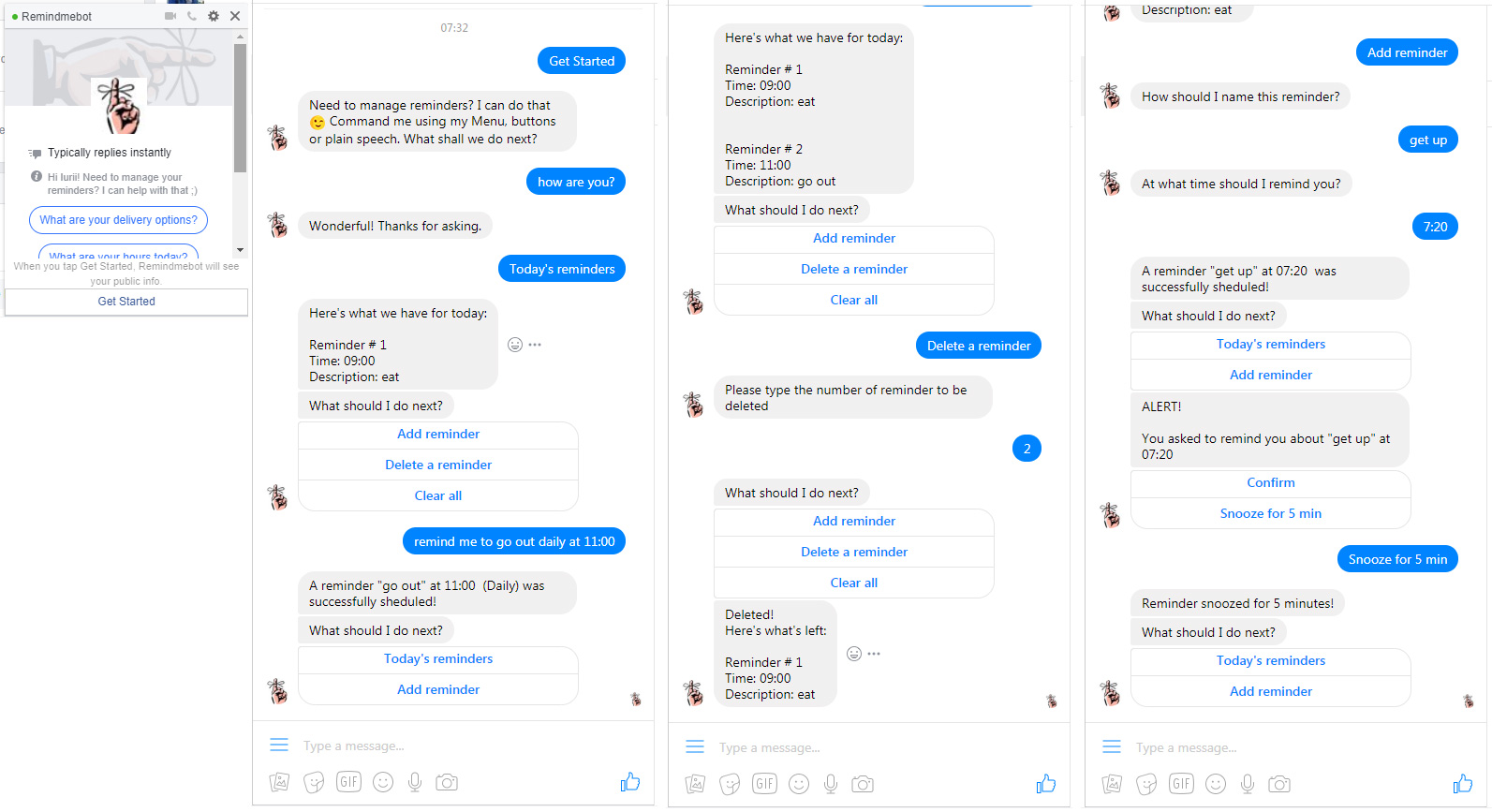

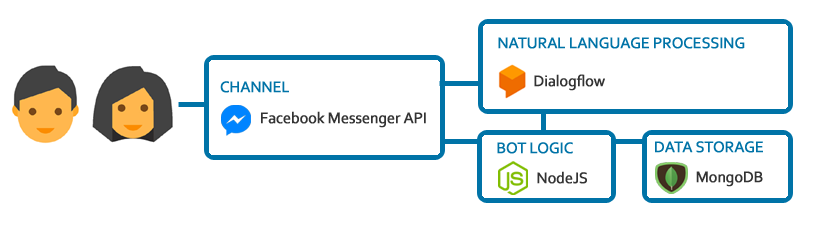

On May 16, 2018, I visited IT Career Day 2018 in Cherkasy, an IT job fair, which was the 1st event of this type for me. There I spoke with representatives of several companies. Preparing for this event I looked through the websites of the main companies and found out that Master of Code (MOC) is making chatbots. I had a talk with the guys from MOC and they suggested that I do a test task: writing a bot on node.js. I took this challenge. Though all my previous projects were in Python, I had never coded in JavaScript before and in general up to 3/4 of the tools used in this project were new to me, I seem to have coped with the task. You can read a bit more about how I made my way from my first "Hello world" in JS to writing and launching Remindmebot in 60 hours here.

About this chatbot: it's a reminder bot written on Node.js using Dialogflow and Facebook Messenger APIs and MongoDB. It can create reminders using NLU (for example it can understand phrases like "Remind me to go cycling at 7:00 on Mondays, Wednesdays and Fridays"), delete all or one specific reminder and send reminder alerts which can be "confirmed" or "snoozed".

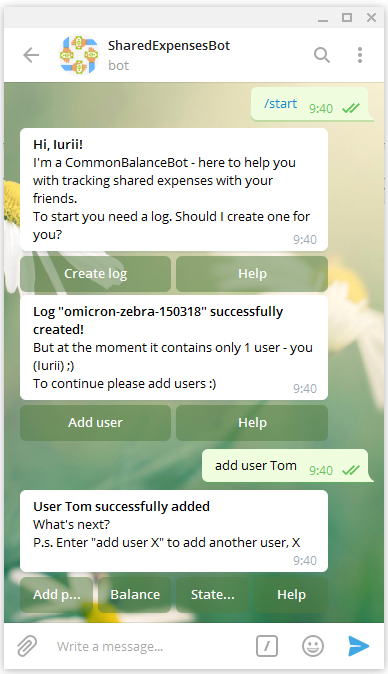

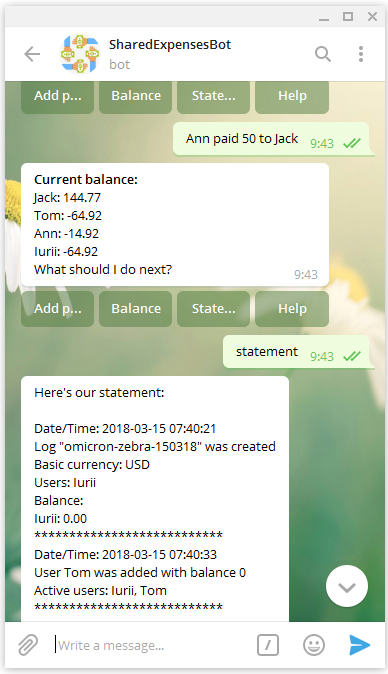

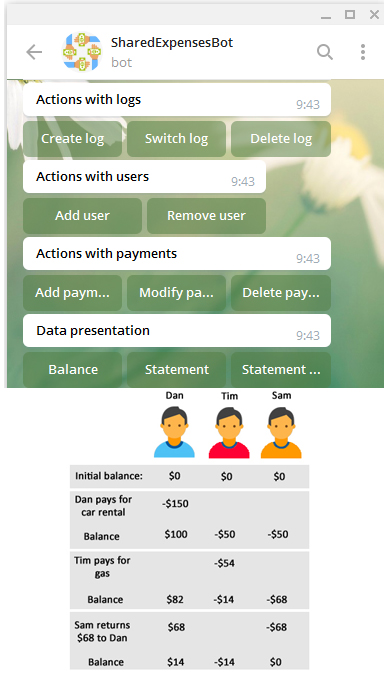

The idea for this training mini-project was suggested by my brother who said that it would be nice to have a chatbot that could help to track shared expenses during travels with friends. For example, when one pays for an apartment, someone else for dinner, the 3rd one for gas, another food/drinks/tickets etc (as an alternative to chipping in with equal sums each time). More detailed information - see github or Dialogflow's forum.
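The arithmetic behind such a tracker is simple: sum what everyone paid, compute the equal share, and each person's balance is what they paid minus that share. Below is a minimal Python sketch assuming equal shares for all participants. The function name `balances` and the sample figures are hypothetical, and this is not the bot's actual code.

```python
from typing import Dict


def balances(paid: Dict[str, float]) -> Dict[str, float]:
    """Return each person's balance: amount paid minus the equal share.

    A positive balance means the group owes this person money,
    a negative one means this person owes money to the group.
    """
    share = sum(paid.values()) / len(paid)
    return {name: round(amount - share, 2) for name, amount in paid.items()}


# Hypothetical trip: Ann paid for the apartment, Bob for dinner, Kim nothing yet.
trip = {"Ann": 90.0, "Bob": 30.0, "Kim": 0.0}
print(balances(trip))  # prints {'Ann': 50.0, 'Bob': -10.0, 'Kim': -40.0}
```

The balances always sum to zero (up to rounding), which is a handy sanity check when expenses are added one by one during a trip.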

Topics learnt/covered in this project: plain python, MongoDB, dialogflow and telegram/facebook integrations.

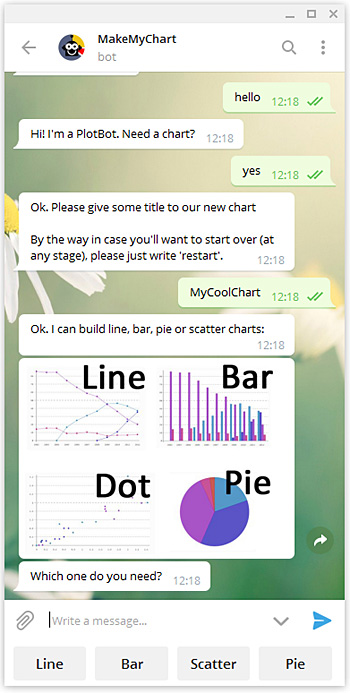

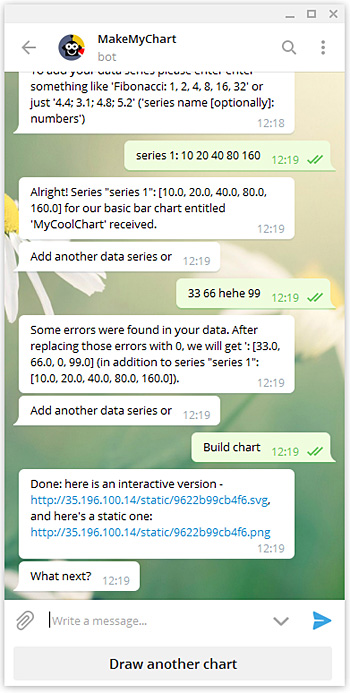

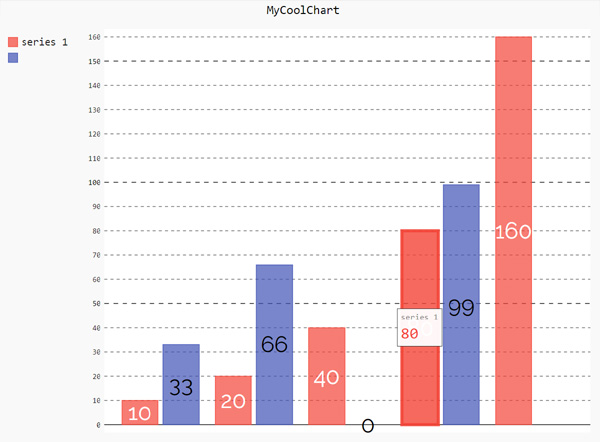

While learning to build chatbots on the dialogflow.com platform I decided to create PlotBot - a chatbot which builds charts (using the pygal Python library for charting). This chatbot used Telegram, Facebook Messenger and Web Demo integrations. As of Jan 2020 the webhook is disabled and the bots are inactive. More detailed information about this chatbot is on github or Dialogflow's forum.

Topics learnt/covered in this project: dialogflow, Heroku, pygal, telegram/facebook integrations.

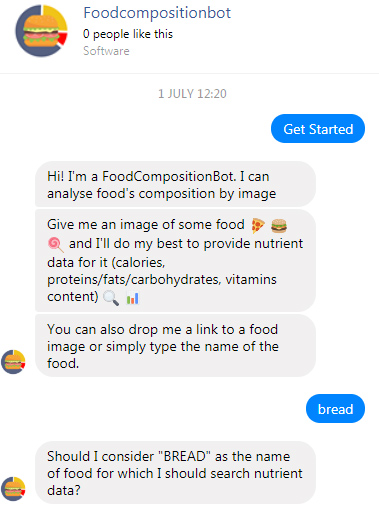

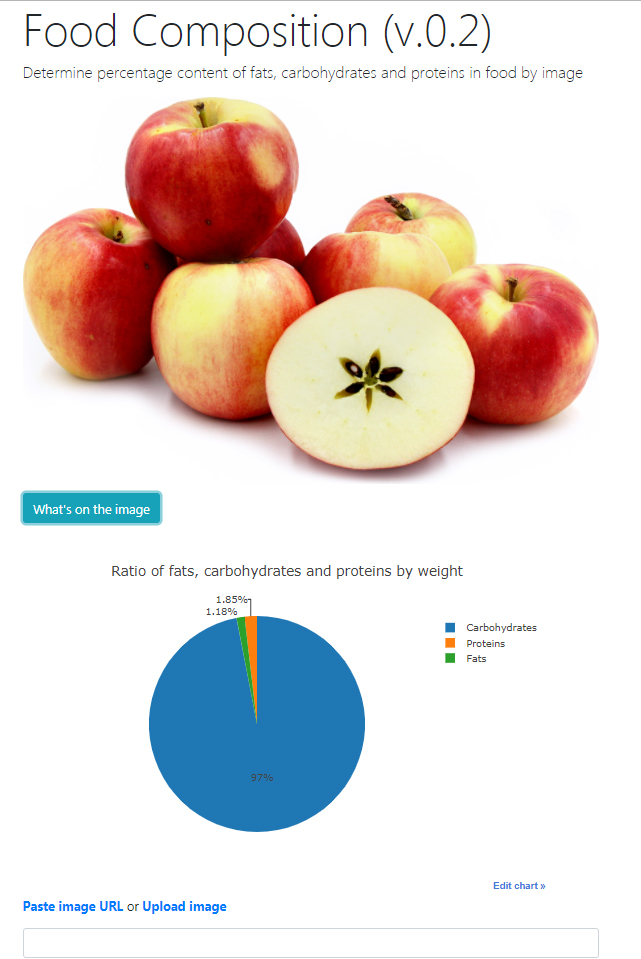

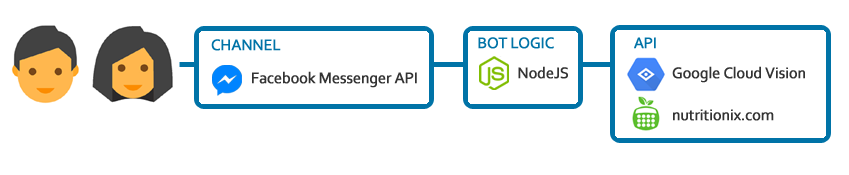

Other, non-chatbot projects

My 2nd real mini-project. Done in Jan 2018. A Flask app that tries to determine an approximate percentage of fats, carbohydrates and proteins in food by image. Made for fun. Still thinking how to filter non-food images ;) Uses Google Vision API and Nutritionix API. Additionally created [what appeared to be my 1st] simple chatbot (see it below on the right) on the dialogflow.com platform that can tell the fats/carbohydrates/proteins % content for the food the user enters (Telegram integration was also used). As of Jan 2020 the webhook is disabled and the bots are inactive.
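The final percentage step can be illustrated with a few lines of Python. This is a hedged sketch, not the app's code: it assumes the nutrition lookup has already returned grams of each macronutrient and that the percentages are shares by weight among these three components only. The function name `macro_percentages` and the sample numbers are hypothetical.

```python
from typing import Dict


def macro_percentages(grams: Dict[str, float]) -> Dict[str, float]:
    """Convert grams of macronutrients into percentage shares of their total."""
    total = sum(grams.values())
    if total == 0:
        # Nothing recognized: report zeros instead of dividing by zero.
        return {name: 0.0 for name in grams}
    return {name: round(100 * value / total, 1) for name, value in grams.items()}


# Hypothetical values for one recognized food item, in grams.
sample = {"fat": 10.0, "carbohydrate": 25.0, "protein": 15.0}
print(macro_percentages(sample))
# prints {'fat': 20.0, 'carbohydrate': 50.0, 'protein': 30.0}
```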

Topics learnt/covered in this project: Flask, REST API (Google Cloud Vision API, Nutritionix API), bootstrap, plot.ly, deployment to a server, dialogflow, Heroku.

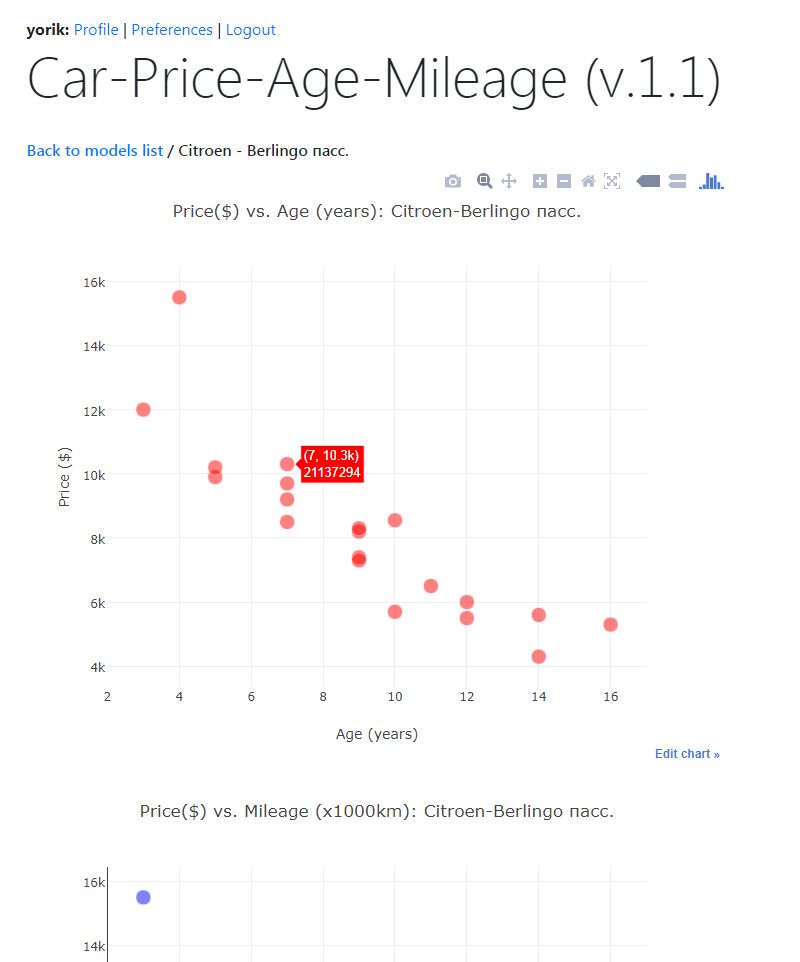

My 1st real mini-project. Started on 01.11.2017 after learning Python/coding for ~175 hours (3-3.5 months) in total, done in ~70 hours. A Flask app that allows a user to choose a car model and get scatter charts showing how this car's price changes depending on its age and mileage. Data is requested from auto.ria.com (the biggest Ukrainian advertisement board for vehicles) using their API. Charts (for age & price, price & mileage and age & mileage) are drawn using 2 charting engines/libraries - pygal and plot.ly. About 50% of the time working on this mini-project was spent learning how to create a user management system in Flask (register, login, profile update, password update, password reset, avatar functions). The app has a preferences page (accessible to registered and logged-in users) where a user can change 2 parameters: the number of advertisements for the model being analyzed (5-50) and the charting engine.

Topics learnt/covered in this project: Flask (including the creation of user management system), vagrant, git, virtualenv, REST API, requests, JSON parsing, charting (pygal, plot.ly), MongoDB, bootstrap, deployment to server.

Knowledge

Here I will be posting links to courses/materials/software and other resources that I’ve covered/worked with (last links go first).

JavaScript Functions, a Pocket Reference // js, javascript, functions

NPM vs Yarn // npm, yarn, node

Non-zero days and other rules for productivity // general, productivity

An Introduction to OAuth 2 // auth

Mixpanel - introduction // mixpanel, logging

14/09/2019 - Visited TedxYouth@Kyiv // general, tedx

Jest - Tutorial //

Jest - Getting started // jest, tests

Dashbot - tracking user data // dashbot, tracking

YAML // yaml

What is Swagger // swagger

15 Tools You Can Use to Beta Test Your Chatbot // chatbot, testing

Attended the 3rd "Tech & Beer" event // tech and beer, meetup

Javascript Classes — Under The Hood // js, javascript

TypeScript documentation // typescript, ts

TypeScript fundamentals - Udemy // typescript, ts, udemy

Lerna (npm package) // lerna, npm, js

Plop (npm package) // plop, npm, js

Chatbots: Your Ultimate Prototyping Tool // chatbot, general

Storytelling And Bot Making // chatbot, general

The Future of AI Is in the Hands of Storytellers // chatbot, general

The conversation designer’s handbook — or how to design chatbots, Google Home actions and Alexa skills that work // chatbots, voicebots, google assistant, alexa, bot

Sample files (media, pdf etc) for development // media examples, general

13 npm Tricks for Faster JavaScript Development // node, npm

AVUXI - partner of Amadeus for points of interest data // hackathon, avuxi, poi

Visited Facebook Developer Circle: Kyiv opening // facebook, general

The 2018 Web Developer Roadmap // general, node

Node.js Best Practices // node, best-practices

Fundamental Node.js Design Patterns // node, patterns

Validating Data With JSON-Schema, Part 1 // json schema

Postman - Intro to scripts // postman

Coursera course: Learning How to Learn: Powerful mental tools to help you master tough subjects // learning, general, coursera

loggly.com - logging service // log, logging

STATE OF MESSAGING 2019 // chatbot, conversational solutions

The largest Node.js best practices list (May 2019) // nodejs

The 10 Best Social Media Management Applications // social media

WhatsApp Business API // whatsapp

Overview of the WhatsApp Business API — and how to leverage it // whatsapp

PM2 (Process Manager 2) // pm2, always up, deployment

Understanding Docker as if it were a Game Boy // docker

6 Easy Ways to Prevent Your Heroku Node App From Sleeping // heroku

Chatfuel tutorial to save and read information from Google Sheets using Integromat // chatfuel, integromat

WE SUMMARIZED 14 NLP RESEARCH BREAKTHROUGHS YOU CAN APPLY TO YOUR BUSINESS // nlp, nlu

Socket.io: let’s go to real time! // nodejs, socket, socket.io

Build a simple chat app with node.js and socket.io // nodejs, socket, socket.io

Automatic Question Answering // nlp, nlu

Technology Radar // technology radar, trends

RCS - Rich Conversation Services // rcs

LivePerson Conversation Builder // lp, liveperson, conversation builder

BotCentral // botcentral

Distributed load test using jMeter on multiple AWS EC2 instances // load testing, jmeter, aws ec2, docker

The top 5 software architecture patterns: How to make the right choice // patterns, coding

Arindam Paul - JavaScript VM internals, EventLoop, Async and ScopeChains (Youtube) // nodejs, event loop

AWS Lambda "warming" (1 | 2 | 3) // aws lambda, warming, serverless

Business requests/projects (Ukraine) // projects, business

Writing middleware for use in Express apps // js, express, nodejs, middleware

botpreview.com // prototyping, visual prototype

AWS SQS // sqs, aws sqs, queue

Virtual Assistants and Consumer AI // chatbot, ai, virtual assistant, alexa, google home

Table associations in relational databases // db, postgres, table associations

Facebook Broadcast API // facebook, messenger, broadcast api

Podervianskogobot - getting feedback // podervianskogobot, chatbot, feedback

Go by examples // go, golang

Go Bot Building Library // go, gobbl

Deployment Go apps to Heroku with Git (using Docker containers) // go, heroku, deployment

An intro to dep: How to manage your Golang project dependencies // go

pgAdmin - installation and use // pgAdmin

Dep // go, dep

GoLang, The Next Language to Learn for Developers // go

Apex-Up // aws lambda, serverless, deploy

Everything you need to know about Packages in Go // go

An introduction to programming in Go // go

A tour of Go // go

How to add full text search to your website // full-text search, search

Bunyan - logging library // node, bunyan, logging

Setting Up An HTTPS Server With Node, Amazon EC2, NGINX And Let’s Encrypt // deploy, aws ec2, nginx, let's encrypt

Deploying a Node App on Amazon EC2 // aws, ec2, deploy

Microsoft Bot Framework: sending channel-specific messages // mbf, microsoft bot framework, chatbot

What is Test Driven Development (TDD)? Tutorial with Example // tests, tdd

The Absolute Beginner’s Guide to Test Driven Development, with a Practical Example // tdd, tests

Dialogflow API v2 // nlu, nlp, dialogflow, chatbot

JS - OOP // js, oop

OOP in a functional style (in Russian) // js, oop

Delete remote branch on Github // git

JavaScript: async/await with forEach() // js, async

Tutorial: Using the Messenger Webview to create richer bot-to-user interactions // messenger, webview

The JavaScript this Keyword // js, this

Call stack, event loop and async programming // js, call stack, async

Functional programming in JavaScript (in Ukrainian) // js

Functional programming in JavaScript (in Russian) // js

Understanding Asynchronous JavaScript — the Event Loop // js, async

Morgan (npm) // npm, morgan

JOI (npm) // npm, joi

The Absolute Minimum Every Software Developer Absolutely, Positively Must Know About Unicode and Character Sets (No Excuses!) // unicode

JMeter Result Analysis: The Ultimate Guide // jmeter, load testing

Jmeter // jmeter, load testing

Jest: Introduction // test, tdd, jest

Revisiting Node.js Testing: Part 1 // test, tdd

An Overview of JavaScript Testing in 2018 // test, tdd

Eslint + Prettier in Vscode // eslint, prettier

Setting up Eslint + Prettier // eslint, prettier

These tools will help you write clean code // general, eslint, prettier

Build a Search Engine with Node.js and Elasticsearch // elastic search, node

How to Integrate Elasticsearch into Your Node.js Application // elastic search

Elastic Search // elastic search

Implementing a Job Queue with Node.js // job queue, kue

tmux // tmux

Job queue // job queue

Automattic/kue // kue

awesome-nlp // nlp

NLP's ImageNet moment has arrived // nlp

Design Framework for Chatbots // chatbot, design

An easy introduction to Natural Language Processing // nlu, nlp

Command line basics // command line

Build your own Action for Google Assistant // actions on google, dialogflow, chatbot

How To Build Your Own Action For Google Home Using API.AI // actions on google, dialogflow, chatbot

Util.promisify // util.promisify, async, javascript

How To Build Your Own Action For Google Home Using API.AI // google actions

Build your own Action for Google Assistant // google assistant, google actions

DB migrations - Sequelize // sequelize, migrations

Schema migration // schema migration, db

Fixing SQL Injection: ORM is not enough // orm, sql

Using Redis with Node JS // redis

Improving database performance with Redis // redis

Redis storage adapter for Microsoft BotBuilder // redis, bot builder

Redis // redis

Merging objects in JS // javascript, merging objects

JS: Destructuring assignment // javascript, destructuring

Simplify your JavaScript – Use .map(), .reduce(), and .filter() // array functions, javascript

State of npm scripts // npm, npm scripts

Introduction to NPM Scripts // npm, npm scripts

NPM scripts // npm, npm scripts

QnA Maker // azure bot service, qna maker

Project personality chat // azure bot service, project personality chat

Conversation learner // azure bot service, conversation learner

Using dotenv package to create environment variables // dotenv, javascript, node

DotEnv // dotenv, javascript, node

Prettier for VSCode // vs code, prettier, eslint

Azure Bot Service Documentation // chatbot, azure

Microsoft LUIS // luis, chatbot, nl

Setting up ESLint on VS Code with Airbnb JavaScript Style Guide // eslint, airbnb, javascript, vs code

Airbnb JavaScript Style Guide // eslint, airbnb, javascript

5 JavaScript Style Guides — Including AirBnB, GitHub, & Google // javascript, eslint, airbnb

Create a bot with the Bot Builder SDK for Node.js // chatbot, bot framework, node

How to Run Dockerized Apps on Heroku… and it’s pretty sweet // docker, heroku

Dockerize Simple Flask App // docker, flask

These 5 “clean code” tips will dramatically improve your productivity // career, coding

Notes to Myself on Software Engineering // career, coding

Ordinary People Focus on the Outcome. Extraordinary People Focus On the Process // career, general

A beginners guide to Docker // docker

Docker overview // docker

Rivescript-chatbot-lambda // chatbot, rivescript, aws lambda

Giving Facebook Chatbot intelligence with RiveScript, NodeJS server running in AWS Lambda serverless architecture // rivescript, aws lambda, chatbot

RiveScript // rivescript, nlu

Conversational vs Transactional Chatbots // chatbot, nlu, chatscript

RiveScript vs ChatScript + // chatscript, rivescript, nlu

RiveScript vs ChatScript // chatscript, rivescript, nlu

How we built AI Chatbot Using JavaScript and ChatScript // chatscript, nlu

Presented FindThisPlaceBot on d3.ru // findthisplace, chatbot, promotion

ChatScript // chatscript, nlu

A Beginners Guide To Understanding The Agile Method // agile

Serverless Telegram bot on AWS Lambda // chatbot, aws lambda

Amazon RDS for PostgreSQL // amazon, postgresql, aws rds

Google Street View - Developer Guide // google, street view

Google Cloud vs AWS in 2018 (Comparing the Giants) // cloud computing, google, aws

Claudia Bot Builder - easy deployment to AWS Lambda // claudia, aws lambda

The Ultimate Guide To Designing A Chatbot Tech Stack // chatbot, stack

Tutorial: Creating and Using Lambda Functions // amazon lambda, aws

Amazon S3 - Github examples // amazon s3, aws

Amazon S3 // amazon s3, aws

JavaScript ES6 — write less, do more // javascript, es6

Object-Relational Mapping (ORM) is a technique that lets you query and manipulate data from a database using an object-oriented paradigm // sql, orm, sequelize

Sequelize - promise-based ORM for Node.js // sql, orm, sequelize

Machine Learning: how to go from Zero to Hero // machine learning, ml

Interactive fiction in context of chatbots // chatbot, interactive fiction

Working with databases (Node Hero: Chapter 5) (in Russian) // node, db

WaveNet: A Generative Model for Raw Audio // chatbot, voicebot, wavenet

Tacotron 2: Generating Human-like Speech from Text // tacotron, chatbot, google

Google Duplex: An AI System for Accomplishing Real-World Tasks Over the Phone // chatbot, voicebot, google duplex

How to build a simple web-based voice bot with api.ai // chatbot, voicebot

A New Approach to Voice User Interfaces // chatbot, voicebot

Connecting to PostgreSQL // postgreSQL

PostgreSQL Tutorial // postgreSQL

PostgreSQL Tutorial // postgreSQL

SQL vs. NoSQL Databases: What’s the Difference? // db, sql, nosql

Relational vs. non-relational databases: Which one is right for you? // db

LivePerson - Sample App // liveperson, chatbot, javascript

LiveEngage Bots FAQs // liveperson, chatbot, javascript

LivePerson: Start Using Bots // liveperson, chatbot, javascript

Callbacks, Promises and Async/Await // promise, async, javascript

6 Reasons Why JavaScript’s Async/Await Blows Promises Away (Tutorial) // nodejs, async

Understand promises before you start using async/await // nodejs, promise, async

Using Async Await in Express with Node 9 // nodejs, async

es6-cheatsheet // javascript, es6

Building a Facebook Chat Bot with Node and Heroku // nodejs, heroku, chatbot

How can I use Google default credentials on Heroku without the JSON file? // google vision api, heroku

Using the Google Cloud Vision API with Node.js // nodejs, google cloud vision

ECMAScript 6 Features // javascript, es6

“Hello World!” app with Node.js and Express // javascript, nodejs, express

Google Fonts // fonts, google, design

Chatbots were the next big thing: what happened? // chatbot

Top Sites for JavaScript Practice Exercises // javascript

JavaScript - Exercises, Practice, Solution // javascript

Gupshup // chatbot

Recast.ai // chatbot

Smooch.io // chatbot

JavaScript (developer.mozilla.org) // javascript

The Ultimate Guide to Chatbots: Why they’re disrupting UX and best practices for building // chatbot, UX

6 Chatbot UX Design ‘Must-haves’ for 2018 // chatbot, UX

Pymessenger2 // chatbot, python, pymessenger2

Choosing better SDK/python wrapper for FB Messenger: 1, 2, 4, 3 // python, chatbot, FB Messenger

How to Create a Facebook Messenger Bot with Python Flask // python, chatbot, FB Messenger

JavaScript Promises: an Introduction // javascript, promise

PYTHON VS NODE.JS: WHICH IS BETTER FOR YOUR PROJECT // articles, python, node.js

Node.js MongoDB // node.js, mongoDB

Building a Facebook Chat Bot with Node and Heroku // chatbot, FB Messenger, node.js, Heroku

How To Create Your Very Own Facebook Messenger Bot with Dialogflow and Node.js In Just One Day // chatbot, FB messenger, node.js, dialogflow

How to Install and Use Node.js and npm (Mac, Windows, Linux) // node.js, npm

“Introduction To JavaScript” course - codecademy.com // javascript

“Hello World!” app with Node.js and Express // javascript, node.js

6 Chatbot UX Design ‘Must-haves’ for 2018 // articles, chatbot

How Businesses are Winning with Chatbots & Ai // articles, chatbot

The 3 Types of Chatbots & How to Determine the Right One for Your Needs // articles, chatbot

Best chatbot platforms to build a chatbot // chatbot, articles

How to Get to 1 Million Users for your Chatbot // chatbot, articles

This is how Chatbots will Kill 99% of Apps // chatbot, articles

10 Tips on Creating an Addictive ChatBot // chatbot, articles

How Businesses are Winning with Chatbots & Ai // chatbot, articles

Google Static Maps API // google maps, static maps api

Do You Want Your Chatbot to Converse in Foreign Languages? My Learnings from Bot Devs // chatbot, i18n, l10n

How to Use ngrok to Test a Local Site // ngrok

Google Maps Distance Matrix API // google maps, distance matrix api

Setting your Telegram Bot WebHook the easy way // telegram, chatbot, webhook

How To Share Localhost To World Using Ngrok // ngrok, deployment

Lesson 4. Webhooks // telegram, chatbot, webhook

Running several bots on one machine: nginx + CherryPy // chatbot, deployment, nginx

Lesson 11. Holding (more or less) meaningful dialogues. Finite-state machines // telegram, chatbot

Finite-state machine: theory and implementation // chatbot, telegram

Creating a Chatbot with Deep Learning, Python, and TensorFlow p.1-3 // chatbot, deep learning

TelegramBot Analytics - botan.io // telegram, chatbot, analytics

Writing a Telegram bot in Python // telegram, chatbot

Creating your first Telegram bot with Python 3 // telegram, chatbot

Building a Telegram bot: keyboards and inline-mode features // telegram, chatbot

7 Live Chat Solutions for Small Businesses // live chat

Telegram bot for a support service (pyTelegramBotAPI) // telegram, chatbot, pyTelegramBotAPI

Embedding Telegram on website to use as Live Chat // telegram, chatbot, web-chat

Request and handle phone number and location with Telegram Bot API // telegram, chatbot

pyTelegramBotAPI // telegram, chatbot, pyTelegramBotAPI

Building Telegram bot Using pyTelegramBotAPI and Flask // telegram, chatbot, python, pyTelegramBotAPI

how-to-create-a-telegram-bot-from-scratch-tutorial (pyTelegramBotAPI) // telegram, chatbot, pytelegrambotapi

different-programming-languages-and-their-fields-of-application // general, articles

Dialogflow API python wrapper (apiai) // dialogflow, nlp, apiai

python-telegram-bot // telegram, chatbot, python-telegram-bot

how-to-create-a-telegram-bot-with-ai-in-30-lines-of-code-in-python (python-telegram-bot) // telegram, chatbot, python-telegram-bot

Dealing with 413 error 'File too big' - nginx on ubuntu, flask // nginx, 413

Nginx: 413 – Request Entity Too Large Error and Solution // nginx, 413

Logging, Flask, and Gunicorn … the Manageable Way // flask, gunicorn, logging

AJAX with jQuery // jquery, ajax

Python Flask jQuery Ajax POST // flask, jquery, ajax

Encoding and Decoding Strings (in Python 3.x) // unicode, encoding

Processing Text Files in Python 3 // unicode, encoding

Unicode HOWTO // unicode, encoding

How to clone git repo into current directory // deployment, git

Getting free SSL certificate - Letsencrypt // ssl, deployment

How to redirect www to non-www - GoogleCloud // deployment, dns

IP address to domain redirection problem in NGINX // deployment, dns

How To Redirect www to Non-www with Apache on Ubuntu 14.04 // deployment, dns

Custom Dialogflow Chatbot using BotUI // chatbot, web chat, botui

Dialogflow V1 API Reference // dialogflow, api

Flask-Babel // flask, flask-babel, l10n, i18n

Top languages to localize your iPhone app and get revenue // l10n, i18n

Localization for Flask Applications // flask, localization, l10n, i18n, internationalization

The Flask Mega-Tutorial, Part XIV: I18n and L10n // flask, localization, l10n, i18n, internationalization

python-twitter: A Python wrapper around the Twitter API // python, twitter

Flask-Session // flask-session, sessions, flask

My Chatbot [web chat for Wordpress] // dialogflow, web chat

Chatbot with Angular 5 & DialogFlow // dialogflow, web chat

Basic html web chat for api.ai dialogflow // dialogflow, web chat

Python Flask jQuery Ajax POST // flask, python, jquery

StoryMap JS // storymap, js, maps, journalism

Place Autocomplete Address Form // google maps, autocomplete

Place Autocomplete // google maps, autocomplete, js

How do you get a query string on Flask? // flask, queries

Flask-JSGlue // flask, JSGlue, js

jQuery posting JSON // python, jquery, js

Send Google Maps marker position to Flask when dragged // flask, google maps, js

Flask Google Maps (plus: how to write a Flask extension) // flask, google maps

How to get the filename without the extension from a path in Python? // python, file extension

Capturing an Image from the User // image upload, input

Passlib 1.7.1 documentation // flask, passlib

Flask-GoogleMaps 0.2.5 // flask, google maps

reCaptcha setup // reCaptcha

Creating Forms // flask, wtf

CSRF Protection // flask, csrf

How to embed a [Twitter] timeline // twitter

Flask extensions registry // flask, extensions

Share your projects - PlotBot // chatbots, dialogflow

How To Serve Flask Applications with Gunicorn and Nginx on Ubuntu 14.04 // deployment, nginx, gunicorn, ubuntu

[Ask Flask] How to deploy multiple apps on a single server using nginx and gunicorn // deployment, nginx, gunicorn

Multiple websites on nginx, one IP // deployment, nginx

How To Set Up Nginx Server Blocks (Virtual Hosts) on Ubuntu 14.04 LTS // deployment, ubuntu, nginx

Installing MongoDB and pymongo on Ubuntu 14.04 // mongodb, deployment, ubuntu

Operators — MongoDB Manual 3.6 // mongodb

8. Errors and Exceptions // python

Using (and abusing) MongoDB ObjectIds as created-on Timestamps // mongodb

Timestamp and ObjectId in mongoDB // mongodb

Storing data with php - flat file or database? // python, db

Chatbot Developers in Ukraine // chatbot, career

Building a Chatbot using Telegram and Python (Parts 1, 2) // chatbot, python, telegram

Integrating Api.ai Recipe Bot with Telegram // chatbot, dialogflow

Telegram Bot API // chatbot, telegram

The UX of AI // AI, articles

Wildcard for entities // chatbot, entities

Material Design for Bootstrap 4 // mdbootstrap

Optimal context lifespan in DialogFlow (API.AI) // chatbot, dialogflow

Step by step guide to DialogFlow (API.AI) // chatbot, dialogflow

Glitch - like Heroku for webhooks but with code editing // glitch, webhook, chatbot

FB Messenger Platform (general info) // chatbot, facebook messenger

Dialogflow: Python Client // chatbot, dialogflow, python

How to create Webhooks for Dialogflow (Api.ai) // chatbot, dialogflow

DialogFlow (formerly API.AI) webhooks under the hood // chatbot, dialogflow, webhook

Deploying app to Heroku with git // heroku, deployment

Getting images from user in a Chatbot (dialogflow) // chatbot, dialogflow

Making of simple AI Chat Bot using webhook | Python | dialogflow.com | API.AI | ngrok // chatbot, ngrok, webhook

Detecting Intent from Audio // chatbot, speech to text

Conversational datasets to train a chatbot // chatbot

AI Chatbot: NLP and ML Platforms Comparison for Creating Best AI // chatbot, AI

Google Dialogflow - Basics // chatbot, dialogflow

Paralleldots - text analysis APIs // machine learning, text analysis, Paralleldots

My Journey Into Data Science and Bio-Informatics — Part 1: Programming // bioinformatics, python

Improving Airbnb Yield Prediction with Text Mining // text mining, machine learning, airbnb

Problems with installing uwsgi - how to solve // deployment, uwsgi

How To Serve Flask Applications with uWSGI and Nginx on Ubuntu 14.04 // flask, ubuntu, deployment, nginx

Protected directories and Files - Flask Web Development with Python 31 // flask, protected files, sentdex

Return Files with send_file - Flask Web Development with Python 30 // flask, sentdex

Flask Mail - Flask Web Development with Python 29 // flask, flask-mail, sentdex

URL Converters - Flask Web Development with Python 28 // flask, sentdex

Jinja Templating Cont'd - Flask Web Development with Python 27 // flask, Jinja, sentdex

Includes - Flask Web Development with Python 26 // flask, includes, sentdex

Flask Tutorial Web Development with Python 24 - Crontab / Cron jobs // flask, sentdex, cron

Learn X in Y minutes // python

Uploading and validating an image from an URL with Django // python, image validation

Style Guide for Python Code // python, pep-0008

Textbox - sentiment analysis of text // machine learning, API

I trained fake news detection AI with >95% accuracy, and almost went crazy // machine learning, articles

Deployment to virtual machine with Ubuntu // deployment, vm, ubuntu

Google Knowledge graph API (general info) // google knowledge graph, api

Nutritionix API // API, nutritionix

Setting up API and Vision Intro - Google Cloud Python Tutorials p.2 (sentdex) // google cloud, vision API, sentdex

Google Cloud Vision API // google cloud, vision API

Writing Great Python Code // python, standards

Practical Flask Web Development Tutorials by sentdex // flask, sentdex

Plotly DASH // plotly, DASH

Plot.ly // plotly

Nutritionix API // API, nutritionix

AWS Public Datasets // API

What are some fun API's to play with? // API

How I Secured An Internship With One Amazing Side Project // general, career

Flask-Login // Flask-login, login

Flask Tutorial Web Development with Python 20 - Login Required Decorator Wrapper // Flask, decorators, login

Flask Tutorial Web Development with Python 10 - Message Flashing // Flask, flash

Message Flashing // Flask, flash

Flask Tutorial Web Development with Python - User Registration - 1, 2, 3 // Flask, registration

Step 13 - Working with Forms in Flask // Flask, forms

Flask Tutorial Web Development with Python 19 - user login system // Flask, login

Flask Tutorial Web Development with Python 12 - GET & POST // Flask, Get, Post

Flask Redirects (301, 302 HTTP responses) // Flask, redirects

The Flask Request // Flask, request

HTML <form> // html, form

How to Get Your First Developer Job in 4 Months // general, career

Flask-Quickstart: Sessions // cookies, sessions

Cookies and Sessions // cookies, sessions

What is the difference between Sessions and Cookies? // cookies, sessions

HTTP cookies // cookies, sessions

Using PSCP to transfer files securely // deployment, pscp

Developing and deploying Python apps using pip and virtualenv // deployment, virtualenv, git, pscp

Bootstrap - Introduction // bootstrap, frontend

Miscellaneous articles on Quora, including 1, 2, 3, 4, 5, 6, 7, 8 // general, career

Introduction to working with MongoDB and PyMongo // mongodb, python

Python Driver (PyMongo) // pymongo, mongodb, python

Getting Started with MongoDB (MongoDB Shell Edition)

Mongobooster // mongodb, mongobooster, ide

Install MongoDB Community Edition on Ubuntu (Vagrant) // mongodb, vagrant

Pygal - Sexy python charting // pygal, charting, python

JSON Library // json, parsing

Requests: HTTP for Humans // requests, python

Principles of good RESTful API Design // api

Flask Minimal Application // flask

Virtualenv // virtualenv

PyCharm // pycharm, ide

Git Tutorial – Codeschool // git

“Learn Git” course - codecademy.com // git

What is VCS? (Git-SCM) • Git Basics #1 // git

How Does the Internet Work? // general info, internet

CS75 (Summer 2012) Lecture 0 HTTP Harvard Web Development David Malan // general info, http

How the Web works // general info, internet

How does the Internet work? // general info, internet

How To Set Up SSH Keys // ssh

Understanding SSH Key Pairs // ssh

SSH Key // ssh

DevOps BootCamp: Packages, Software, Libraries // unix, libraries

DevOps BootCamp: Files – UNIX // files, unix

DevOps BootCamp: Users, Groups, Permissions - UNIX // users, unix

“Learn the Command Line” course - codecademy.com // command line

DevOps BootCamp: Shell Navigation // command line, shell, unix

DevOps BootCamp: Operating Systems // general info, os

Vagrant (install, setup, boxes, ssh, networking etc) // vagrant

Virtualbox // virtualbox

Python coding practice - rosalind.info (28 tasks) (account, github) // python, bioinformatics

Python coding practice - codewars.com (10 tasks) (account, github) // python

Python coding practice - practicepython.org (27 tasks) (github) // python

Python coding practice - coderbyte.com (10 tasks) (account, github) // python

Python coding practice - codingbat.com (account) // python

“Learn Python” course - codecademy.com // python

Interests/About me

I’m 36; I was born and live in Cherkasy, Ukraine. I came to coding from biology: I have a master’s degree in human physiology and an unfinished PhD, and while at school and university I was a winner of All-Ukrainian Biological Olympiads. I have more than 10 years of experience as an English>Russian medical translator (TA Medconsult), translating for Novartis, Pfizer, Roche, Bristol-Myers Squibb, Sanofi, Regeneron Pharmaceuticals and others. I completed a 2-month internship in a molecular biology lab, INSERM U963 / CNRS UPR9022 (Strasbourg, France). I have been learning to code since October 2017. Since September 18, 2018 I have worked as a chatbot developer (node.js) at Master of Code.

I’m married and we have 3 kids (born in 2012, 2014 & 2014); I try to keep a more or less healthy balance between work and family/life. I like mountain biking – Strava (login needed).